The Demo Problem: The “Vibe” vs. The “System”

In 2026, the novelty of an AI agent answering a question has evaporated. Every developer can string together a “Hello World” demo using the latest Anthropic or OpenAI SDK. These demos usually look flawless on LinkedIn: the agent reads a PDF, summarizes it, and perhaps even “books a flight” in a mock environment.

However, the “Demo-to-Production Gap” is wider than ever. When these agents hit real users, they encounter edge cases that a notebook can’t simulate:

- Prompt Injection & Tool Abuse: An agent given a “search_database” tool is tricked into dropping tables.

- The Cost Spiral: A single user query triggers a recursive loop, costing $15 in tokens before the safety timeout kicks in.

- Context Drifting: The agent forgets the user’s original intent because the context window is stuffed with irrelevant tool outputs.

- The Black Box: A high-value customer receives a nonsensical answer, and the engineering team has zero logs to explain why the agent chose that specific tool path.

Building for production in 2026 means treating the LLM as a non-deterministic CPU: a powerful but volatile component that must be wrapped in rigorous, deterministic software engineering.

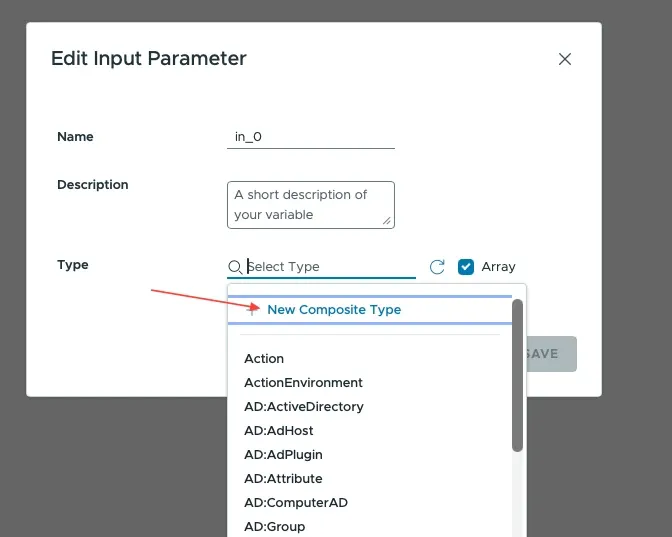

1. Tool Design: Least Privilege and Strong Contracts

The primary way an agent interacts with the world is through tools. In early development, it’s tempting to give an agent broad tools like execute_python or access_web. In production, this is a security and reliability nightmare.

The Golden Rule of Tooling: Every tool must have a Strict Schema and a Narrow Scope.

Instead of a generic database_query tool, build narrowly scoped tools whose inputs are validated with Pydantic. Validation cannot stop the LLM from proposing “hallucinated” parameters, but it rejects them before they reach your backend.

Example: Validated Tool Contracts
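A minimal sketch of such a contract, written with only the standard library so it runs anywhere; in a real codebase a Pydantic model would likely replace the hand-written checks. The tool name `get_order_status`, its fields, and the `ORD-123456` ID format are hypothetical and chosen purely for illustration.

```python
import re
from dataclasses import dataclass

# Hypothetical ID format, assumed for this example only.
ORDER_ID_PATTERN = re.compile(r"ORD-\d{6}")

# Tool description handed to the model. Note the negative constraint.
TOOL_SPEC = {
    "name": "get_order_status",
    "description": (
        "Return the shipping status of a single order. "
        "Do NOT use this for refunds, price negotiations, or account changes."
    ),
    "parameters": {
        "type": "object",
        "properties": {
            "order_id": {"type": "string", "pattern": r"^ORD-\d{6}$"},
            "include_history": {"type": "boolean"},
        },
        "required": ["order_id"],
        "additionalProperties": False,
    },
}


@dataclass(frozen=True)
class GetOrderStatusArgs:
    order_id: str
    include_history: bool = False

    def __post_init__(self):
        # fullmatch rejects anything before or after the expected ID.
        if not isinstance(self.order_id, str) or not ORDER_ID_PATTERN.fullmatch(self.order_id):
            raise ValueError(f"order_id must look like ORD-123456, got {self.order_id!r}")
        if not isinstance(self.include_history, bool):
            raise ValueError("include_history must be a boolean")


def parse_tool_args(raw: dict) -> GetOrderStatusArgs:
    """Validate arguments proposed by the model before any backend call."""
    unknown = set(raw) - {"order_id", "include_history"}
    if unknown:
        raise ValueError(f"unexpected parameters: {sorted(unknown)}")
    if "order_id" not in raw:
        raise ValueError("missing required parameter: order_id")
    return GetOrderStatusArgs(**raw)
```

The backend only ever receives a `GetOrderStatusArgs` instance, so a malformed or injected argument fails at the boundary instead of inside a query.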

By using Negative Constraints in your tool descriptions (e.g., “Do NOT use this for price negotiations”), you provide the “guardrails” the LLM needs to make better routing decisions during its reasoning phase.

2. Memory Architecture: The Tiered Approach

In 2026, models like Claude 4 and GPT-5 have massive context windows, but “stuffing the prompt” is still a bad architectural choice. It increases latency (Time to First Token) and invites the “lost in the middle” effect, where the model overlooks crucial data buried deep in a long prompt.

A production agent uses a Tiered Memory System:

- Hot Memory (In-Context): The last 3–5 turns of the conversation.

- Warm Memory (Summary): A compressed summary of the conversation before the hot memory window.

- Cold Memory (Vector Store): Semantic retrieval of relevant facts from previous sessions, including ones from months ago.
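One way the three tiers could be assembled into a single prompt is sketched below. The `TieredMemory` class and its method names are hypothetical, and the cold tier uses a toy word-overlap ranking as a stand-in for a real embedding search against a vector database.

```python
import re
from dataclasses import dataclass, field


def _words(text: str) -> set:
    """Lowercased word set, punctuation stripped."""
    return set(re.findall(r"\w+", text.lower()))


@dataclass
class TieredMemory:
    hot: list = field(default_factory=list)         # recent turns, kept verbatim
    warm_summary: str = ""                          # compressed older turns
    cold_facts: list = field(default_factory=list)  # stand-in for a vector store

    def retrieve_cold(self, query: str, k: int = 2) -> list:
        """Toy retrieval: rank stored facts by words shared with the query."""
        query_words = _words(query)
        scored = [(len(query_words & _words(fact)), fact) for fact in self.cold_facts]
        ranked = sorted(scored, key=lambda pair: pair[0], reverse=True)
        return [fact for score, fact in ranked if score > 0][:k]

    def build_prompt(self, system_prompt: str, user_query: str) -> list:
        messages = [{"role": "system", "content": system_prompt}]
        if self.warm_summary:
            messages.append(
                {"role": "system", "content": f"Conversation summary: {self.warm_summary}"}
            )
        facts = self.retrieve_cold(user_query)
        if facts:
            bullet_list = "\n".join(f"- {fact}" for fact in facts)
            messages.append(
                {"role": "system", "content": f"Relevant facts from earlier sessions:\n{bullet_list}"}
            )
        messages.extend(self.hot)  # hot turns stay closest to the new question
        messages.append({"role": "user", "content": user_query})
        return messages
```

Only facts that actually match the current query enter the prompt, which keeps the context small even when the cold store grows large.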

Implementing a Sliding Window with Summarization

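```python
def call_summarization_model(messages: list[dict]) -> str:
    """Placeholder: replace with a call to a cheap summarization model."""
    raise NotImplementedError


def manage_context(messages: list[dict], threshold: int = 10, hot_turns: int = 5) -> list[dict]:
    # Below the threshold, the whole history still fits in hot memory.
    if len(messages) <= threshold:
        return messages

    # Everything older than the last `hot_turns` messages gets compressed.
    to_summarize = messages[:-hot_turns]
    summary = call_summarization_model(to_summarize)

    # Reconstruct the prompt: System + Summary + Hot Turns
    return [
        {"role": "system", "content": f"Previous conversation summary: {summary}"}
    ] + messages[-hot_turns:]
```

3. Grounding: Eliminating Hallucinations with RAG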

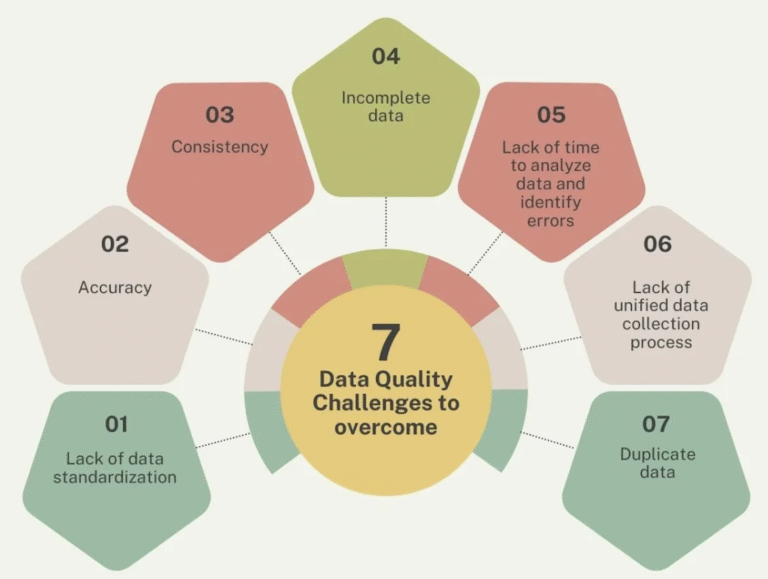

Hallucination isn’t a lack of intelligence; it’s a side effect of the model trying to be helpful without enough data. To ship to production, you must implement Hard Grounding.

You must enforce a “Knowledge-First” policy. If the vector database doesn’t return a high-confidence match, the agent should be programmed to admit ignorance rather than guessing.
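A “Knowledge-First” gate might look like the sketch below. The `retrieve` and `generate` callables, the `GroundedAnswer` type, and the 0.75 score threshold are all assumptions for illustration; the right threshold depends on your embedding model and should be tuned against real queries.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple

FALLBACK_TEXT = "I don't have enough information to answer that."


@dataclass
class GroundedAnswer:
    text: str
    sources: List[str]
    grounded: bool


def answer_with_grounding(
    query: str,
    retrieve: Callable[[str], List[Tuple[str, float]]],  # returns (document, score) pairs
    generate: Callable[[str, List[str]], str],           # model call restricted to the given docs
    min_score: float = 0.75,                             # assumed threshold, tune per system
) -> GroundedAnswer:
    """Only call the model when retrieval clears the confidence bar."""
    hits = [(doc, score) for doc, score in retrieve(query) if score >= min_score]
    if not hits:
        # Knowledge-first: no strong match means no generation at all.
        return GroundedAnswer(FALLBACK_TEXT, [], grounded=False)
    docs = [doc for doc, _ in hits]
    return GroundedAnswer(generate(query, docs), docs, grounded=True)
```

Because the refusal happens in code rather than in the prompt, the model never gets the chance to guess when retrieval comes back empty.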

4. The Agentic Loop: Managing Multi-Step Reasoning

A production-ready agent uses an Iterative Loop where it can think, act, observe, and re-evaluate. The danger here is the “Infinite Loop” where the agent keeps trying a failing tool.

Key Guardrails for the Loop:

- Max Iterations: Never let an agent run more than 5–10 steps.

- Token Budgets: Kill the process if a single task exceeds a cost threshold (e.g., $0.50).

- Human-in-the-Loop (HITL): If the agent’s “confidence” score drops or it repeats a tool call 3 times, escalate to a human.
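The three guardrails above can be sketched as one small guard object that the loop consults on every step. The `LoopGuard` class, its default limits, and the `GuardrailTripped` exception are hypothetical names; the per-step cost is assumed to come from your provider's usage data.

```python
import json
from collections import Counter


class GuardrailTripped(Exception):
    """Raised when the loop must stop or hand off to a human."""


class LoopGuard:
    def __init__(self, max_iterations: int = 8, max_cost_usd: float = 0.50, max_repeats: int = 3):
        self.max_iterations = max_iterations
        self.max_cost_usd = max_cost_usd
        self.max_repeats = max_repeats
        self.iterations = 0
        self.cost_usd = 0.0
        self._calls = Counter()

    def check_step(self, step_cost_usd: float) -> None:
        """Call once per LLM round trip with that step's cost."""
        self.iterations += 1
        self.cost_usd += step_cost_usd
        if self.iterations > self.max_iterations:
            raise GuardrailTripped("max iterations exceeded")
        if self.cost_usd > self.max_cost_usd:
            raise GuardrailTripped("cost budget exceeded")

    def check_tool_call(self, name: str, arguments: dict) -> None:
        """Call before executing a tool; identical calls are counted together."""
        key = (name, json.dumps(arguments, sort_keys=True))
        self._calls[key] += 1
        if self._calls[key] >= self.max_repeats:
            raise GuardrailTripped(
                f"tool {name!r} called {self._calls[key]} times with the same arguments; "
                "escalate to a human"
            )
```

The caller catches `GuardrailTripped` at the top of the loop and decides whether to return an apology, retry with a different plan, or open a ticket for a human.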

The Iterative Agent Pattern

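```python
MAX_ITERATIONS = 8  # hard cap, within the 5 to 10 step range suggested above


def run_agent_loop(user_input: str) -> str:
    # `llm`, `available_tools`, `build_initial_context`, and `execute_tools`
    # are application-specific and assumed to be defined elsewhere.
    context = build_initial_context(user_input)

    for _ in range(MAX_ITERATIONS):
        response = llm.generate(context, tools=available_tools)

        if response.is_final_answer:
            return response.text

        if response.tool_calls:
            # Feed the tool output back so the model can observe and re-evaluate.
            results = execute_tools(response.tool_calls)
            context.append({"role": "tool", "content": results})

    # Iteration budget exhausted: fail closed instead of looping forever.
    return "I'm sorry, I couldn't resolve this in the allotted steps."
```

5. Observability: Tracking the “Chain of Thought”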

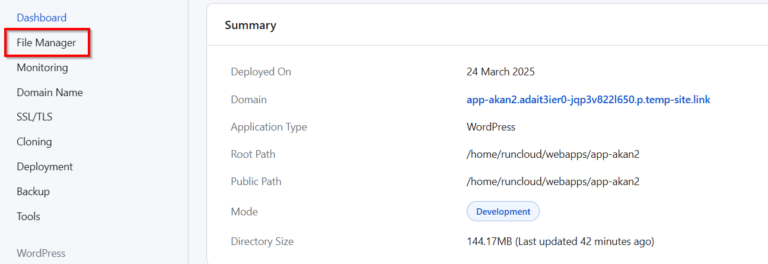

You cannot debug an AI agent using standard stack traces. When an agent fails, you need to see the Trace: the specific sequence of reasoning that led to the error.

In 2026, tools like LangSmith, Arize Phoenix, or custom OpenTelemetry implementations are mandatory. You should log:

- The “Thought” vs the “Action”: What did the model say it was going to do vs what it actually did?

- Per-Step Latency: Which tool is slowing down the UX?

- Token Usage per Step: Is the agent becoming more “verbose” over time?
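A hand-rolled recorder for these three signals could look like the sketch below. The `AgentTrace` class and its field names are hypothetical; in practice a tracing library would play this role, but the shape of the recorded data is similar.

```python
import json
import time
import uuid
from contextlib import contextmanager


class AgentTrace:
    def __init__(self, trace_id=None):
        self.trace_id = trace_id or f"agent_{uuid.uuid4().hex[:8]}"
        self.steps = []

    @contextmanager
    def step(self, thought: str, action: str, tool_input=None):
        """Record one step: what the model said it would do vs. what ran."""
        record = {
            "step": len(self.steps) + 1,
            "thought": thought,   # the model's stated intent
            "action": action,     # the tool that was actually invoked
            "input": tool_input,
        }
        started = time.perf_counter()
        try:
            yield record  # the caller fills in "output" and "tokens"
        except Exception as exc:
            record["error"] = repr(exc)
            raise
        finally:
            # Latency is recorded even when the step fails.
            record["latency_ms"] = round((time.perf_counter() - started) * 1000, 2)
            self.steps.append(record)

    def to_json(self) -> str:
        total_tokens = sum(step.get("tokens", 0) for step in self.steps)
        return json.dumps(
            {"trace_id": self.trace_id, "steps": self.steps, "total_tokens": total_tokens},
            indent=2,
        )
```

Each tool call is wrapped in `with trace.step(...) as record:` and the block sets `record["output"]` and `record["tokens"]`, so a failed run still leaves a complete trail up to the failing step.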

Trace Data Structure Example

```json
{
  "trace_id": "agent_8821",
  "steps": [
    {
      "step": 1,
      "action": "search_docs",
      "input": "How to reset password?",
      "output": "Found Article #402",
      "latency": "450ms"
    },
    {
      "step": 2,
      "action": "final_response",
      "content": "You can reset your password by...",
      "tokens": 142
    }
  ],
  "total_cost_usd": 0.0042
}
```

6. Resilience and Graceful Degradation

Production systems fail. In the world of agents, failure looks like the model becoming unresponsive or the API hitting a rate limit.

Strategies for Agent Resilience:

- Model Fallbacks: If `Claude-3.5-Sonnet` times out, fail over to `Claude-3-Haiku`. It might be less “smart,” but it’s better than a 500 error.

- Output Parsers: Never trust the LLM to return valid JSON. Always wrap your response handling in a `try-except` block that asks the model to “Correct your formatting” once before failing.

- Semantic Caching: Use a tool like GPTCache to store responses to common questions. If a user asks a question that was answered 5 minutes ago, serve the cached version. A cache hit skips the LLM call, saving essentially all of the model cost and most of the latency.
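The first two strategies can be sketched with plain callables standing in for model clients. `ModelFn`, `call_with_fallback`, and `parse_json_with_retry` are hypothetical names, and the broad `except Exception` is a simplification: real code would catch the specific timeout and rate-limit errors raised by your SDK.

```python
import json
from typing import Any, Callable, Sequence

ModelFn = Callable[[str], str]  # assumed interface: prompt in, raw text out


def call_with_fallback(prompt: str, models: Sequence[ModelFn]) -> str:
    """Try each model in order, moving on when one times out or errors."""
    last_error = None
    for model in models:
        try:
            return model(prompt)
        except Exception as exc:  # simplification: narrow this in real code
            last_error = exc
    raise RuntimeError("all models failed") from last_error


def parse_json_with_retry(prompt: str, model: ModelFn) -> Any:
    """Parse the reply as JSON, asking for one formatting correction before failing."""
    raw = model(prompt)
    try:
        return json.loads(raw)
    except json.JSONDecodeError as exc:
        correction = (
            f"{prompt}\n\nYour previous reply was not valid JSON ({exc.msg}). "
            "Correct your formatting and reply with JSON only."
        )
        # A second failure propagates to the caller: one retry, then fail.
        return json.loads(model(correction))
```

Ordering the `models` sequence from most to least capable gives the degradation path described above: the weaker model only answers when the stronger one is unavailable.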

Summary: The Checklist for Shipping

Before you move your agent from dev to prod, ensure you can check these boxes:

- [ ] Validation: All tool inputs are validated by Pydantic/TypeBox.

- [ ] Rate Limiting: Users cannot spam the agent and drain your API credits.

- [ ] Security: Tools follow the principle of “Least Privilege.”

- [ ] Monitoring: You have an active dashboard showing cost-per-query and hallucination rates.

- [ ] Fallback: The agent has a “Self-Correction” loop for malformed outputs.

The difference between a toy and a tool is reliability. In 2026, the “cool” factor of AI is gone; only the systems that consistently deliver value without breaking will survive.