As AI inference moves from prototype to production, Java services must handle high-concurrency workloads without breaking existing APIs. This article examines patterns for scaling AI model serving in Java while preserving API contracts. We compare synchronous and asynchronous approaches, including virtual threads and reactive streams, and discuss when to use in-process JNI/FFM calls versus network calls over gRPC or REST. We also present concrete guidelines for API versioning, timeouts, circuit breakers, bulkheads, rate limiting, graceful degradation, and observability using tools such as Resilience4j, Micrometer, and OpenTelemetry.

Java code examples illustrate each pattern, from a blocking wrapper with a thread pool and queue, to a non-blocking implementation using CompletableFuture and virtual threads, to a Reactor-based example. We also show a gRPC client/server stub, a batching implementation, Resilience4j integration, and Micrometer/OpenTelemetry instrumentation, along with performance considerations and deployment best practices. Finally, we offer a benchmarking strategy and a migration checklist with anti-patterns to avoid.

Problem Statement and Goals

Serving modern AI/ML models often requires high concurrency and heavy compute. Legacy monolithic inference doesn’t scale to production levels. As one author notes, the era of the monolithic AI script is over; successful deployments now use distributed, containerized microservices under orchestration. Java backend teams face two main challenges:

- Scale: Absorb spikes in inference requests through batching, sensible GPU and CPU placement, and efficient resource use.

- Stability: Maintain existing API contracts, enforce SLAs, and prevent cascading failures when models or downstream systems misbehave.
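Batching is often the largest throughput lever for model serving, because one forward pass over N inputs usually costs much less than N separate passes, especially on a GPU. The following is a minimal micro-batching sketch, assuming the model exposes a batch function that returns outputs in input order. MicroBatcher, submit, maxBatchSize, and maxWaitMillis are hypothetical names, not a library API.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.BlockingQueue;
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.LinkedBlockingQueue;
import java.util.concurrent.TimeUnit;
import java.util.function.Function;

// Hypothetical micro-batcher: groups single requests into one batched model call.
final class MicroBatcher<I, O> implements AutoCloseable {
    // A queued input plus the future its caller is waiting on.
    private record Pending<A, B>(A input, CompletableFuture<B> result) {}

    private final BlockingQueue<Pending<I, O>> queue = new LinkedBlockingQueue<>();
    private final Function<List<I>, List<O>> batchModel; // assumed to return outputs in input order
    private final int maxBatchSize;
    private final long maxWaitNanos;
    private final Thread worker;
    private volatile boolean running = true;

    MicroBatcher(Function<List<I>, List<O>> batchModel, int maxBatchSize, long maxWaitMillis) {
        this.batchModel = batchModel;
        this.maxBatchSize = maxBatchSize;
        this.maxWaitNanos = TimeUnit.MILLISECONDS.toNanos(maxWaitMillis);
        this.worker = new Thread(this::drainLoop, "micro-batcher");
        this.worker.setDaemon(true);
        this.worker.start();
    }

    CompletableFuture<O> submit(I input) {
        if (!running) throw new IllegalStateException("batcher is closed");
        CompletableFuture<O> result = new CompletableFuture<>();
        queue.add(new Pending<>(input, result));
        return result;
    }

    private void drainLoop() {
        List<Pending<I, O>> batch = new ArrayList<>(maxBatchSize);
        while (running || !queue.isEmpty()) {
            try {
                // Wait briefly for the first request so the loop can notice close().
                Pending<I, O> first = queue.poll(50, TimeUnit.MILLISECONDS);
                if (first == null) continue;
                batch.add(first);
                // Keep collecting until the batch is full or maxWait has elapsed.
                long deadline = System.nanoTime() + maxWaitNanos;
                while (batch.size() < maxBatchSize) {
                    long remaining = deadline - System.nanoTime();
                    if (remaining <= 0) break;
                    Pending<I, O> next = queue.poll(remaining, TimeUnit.NANOSECONDS);
                    if (next == null) break;
                    batch.add(next);
                }
                dispatch(batch);
            } catch (InterruptedException e) {
                for (Pending<I, O> p : batch) p.result().completeExceptionally(e);
                Thread.currentThread().interrupt();
                return;
            } finally {
                batch.clear();
            }
        }
    }

    // One model call for the whole batch, then fan the results back out to callers.
    private void dispatch(List<Pending<I, O>> batch) {
        List<I> inputs = new ArrayList<>(batch.size());
        for (Pending<I, O> p : batch) inputs.add(p.input());
        try {
            List<O> outputs = batchModel.apply(inputs);
            for (int i = 0; i < batch.size(); i++) batch.get(i).result().complete(outputs.get(i));
        } catch (RuntimeException e) {
            for (Pending<I, O> p : batch) p.result().completeExceptionally(e);
        }
    }

    @Override
    public void close() {
        running = false; // the worker drains what is already queued, then exits
        try {
            worker.join();
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
    }
}
```

maxWaitMillis trades a few milliseconds of added latency for larger batches, so it should be tuned against the latency SLA rather than set once.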

Our goal is to serve AI inference from Java services with maximum throughput and resource efficiency while preserving API semantics. We explore concurrency models (blocking vs. non-blocking), serving architectures (in-process JNI/FFM vs. gRPC/REST microservices), and reliability patterns. Throughout, we emphasize stable APIs: versioning strategies, backward compatibility, and graceful fallbacks when a model or the infrastructure fails. Finally, we discuss operational topics (autoscaling, canary model deployment, observability) and provide detailed Java code samples.

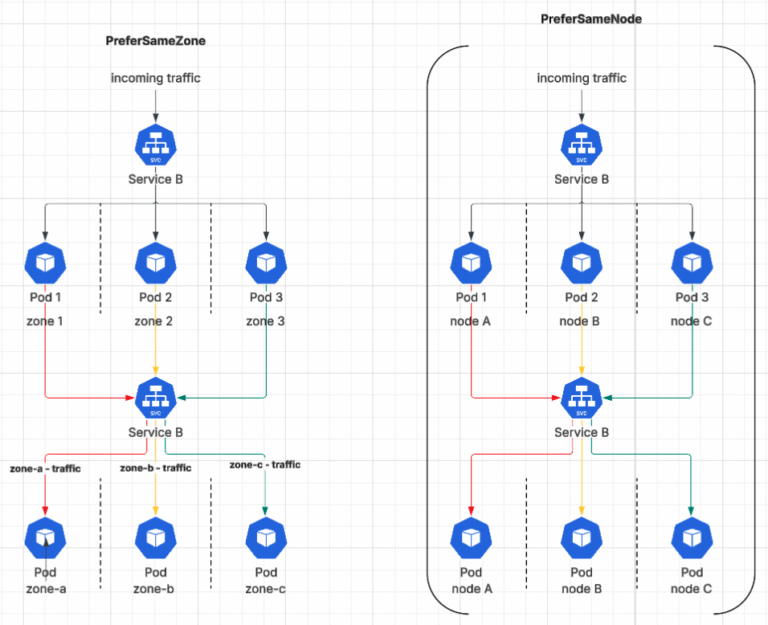

Architectural Patterns

AI serving can follow multiple architectural patterns, each with trade-offs. Virtual threads let you write synchronous-looking code while the JVM multiplexes them onto a small number of OS carrier threads, which makes them a good fit for high-concurrency I/O tasks. Reactive streams yield efficient non-blocking pipelines with backpressure, but they require a reactive framework such as Reactor. For cross-service calls, gRPC (HTTP/2 with binary Protobuf payloads) typically has lower overhead than JSON over REST, whereas REST is simpler to adopt and debug. In-process calls via JNI or the Foreign Function & Memory (FFM) API avoid network and serialization overhead but require careful management of off-heap memory.
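Whichever transport you choose, keeping it behind a small interface lets you move between in-process and remote serving without changing the public API. The sketch below assumes a hypothetical ModelBackend interface. The in-process class uses a placeholder computation where a JNI/FFM binding would go, and the HTTP class uses the JDK's java.net.http client with an assumed endpoint and an assumed comma-separated wire format. A gRPC backend would be a third implementation built on generated stubs (not shown).

```java
import java.net.URI;
import java.net.http.HttpClient;
import java.net.http.HttpRequest;
import java.net.http.HttpResponse;
import java.time.Duration;

// Hypothetical port: callers depend on this contract, not on the transport behind it.
interface ModelBackend {
    float[] infer(float[] features) throws Exception;
}

// In-process backend: a real one would wrap a JNI/FFM binding; this is a stand-in.
final class InProcessBackend implements ModelBackend {
    @Override
    public float[] infer(float[] features) {
        float sum = 0f;
        for (float f : features) sum += f;
        return new float[] { sum }; // placeholder "model": sums the features
    }
}

// Remote backend over HTTP; the endpoint and CSV wire format are assumptions.
final class HttpBackend implements ModelBackend {
    private final HttpClient client = HttpClient.newBuilder()
            .connectTimeout(Duration.ofSeconds(1))
            .build();
    private final URI endpoint;

    HttpBackend(URI endpoint) { this.endpoint = endpoint; }

    @Override
    public float[] infer(float[] features) throws Exception {
        HttpRequest request = HttpRequest.newBuilder(endpoint)
                .timeout(Duration.ofMillis(500)) // per-request deadline
                .header("Content-Type", "text/csv")
                .POST(HttpRequest.BodyPublishers.ofString(toCsv(features)))
                .build();
        HttpResponse<String> response = client.send(request, HttpResponse.BodyHandlers.ofString());
        if (response.statusCode() != 200) {
            throw new IllegalStateException("model server returned " + response.statusCode());
        }
        return fromCsv(response.body());
    }

    static String toCsv(float[] values) {
        StringBuilder sb = new StringBuilder();
        for (int i = 0; i < values.length; i++) {
            if (i > 0) sb.append(',');
            sb.append(values[i]);
        }
        return sb.toString();
    }

    static float[] fromCsv(String csv) {
        String[] parts = csv.trim().split(",");
        float[] out = new float[parts.length];
        for (int i = 0; i < parts.length; i++) out[i] = Float.parseFloat(parts[i].trim());
        return out;
    }
}
```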

Design Guidelines

API Versioning/Backwards Compatibility

Always version your inference APIs so clients aren’t broken by model changes. Follow semantic versioning: add a minor version for new optional features, and bump the major version only for incompatible changes. Clearly document deprecated fields and endpoints. Prefer additive changes over removing fields, and support concurrent versions when possible. Run contract tests across versions to ensure old clients still work.
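A sketch of these rules, assuming hypothetical ModelResult and PredictionRenderer types: v1 gained an optional field in a minor revision, while a structural change ships as a new major version (v2) served side by side with v1. JSON is built by hand only to keep the example dependency-free.

```java
import java.util.Locale;

// Hypothetical internal result from whichever model version is currently deployed.
record ModelResult(String label, double score, String modelVersion) {}

// Sketch only: no JSON escaping; use a JSON library in practice.
final class PredictionRenderer {
    // v1 contract: {label, score}. v1.1 added the optional "modelVersion" field, an
    // additive change, so older v1 clients that ignore unknown fields keep working.
    static String renderV1(ModelResult r) {
        String base = String.format(Locale.ROOT, "\"label\":\"%s\",\"score\":%.4f", r.label(), r.score());
        return r.modelVersion() == null
                ? "{" + base + "}"
                : "{" + base + ",\"modelVersion\":\"" + r.modelVersion() + "\"}";
    }

    // v2 contract: a breaking change (a list of predictions, "confidence" instead of "score"),
    // hence a new major version rather than a modification of v1.
    static String renderV2(ModelResult r) {
        return String.format(Locale.ROOT,
                "{\"predictions\":[{\"label\":\"%s\",\"confidence\":%.4f}]}", r.label(), r.score());
    }

    // Route on the major version in the path so both contracts stay available.
    static String render(String path, ModelResult r) {
        if (path.startsWith("/v1/")) return renderV1(r);
        if (path.startsWith("/v2/")) return renderV2(r);
        throw new IllegalArgumentException("unsupported API version: " + path);
    }
}
```

A contract test that pins the exact v1 output for a fixed input catches accidental breaking changes before clients do.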

Timeouts and Retries

Never let an inference call hang indefinitely. Configure timeouts on all client calls; for example, use Resilience4j’s TimeLimiter or simply Future.get(timeout, unit) to enforce a maximum latency. Retry only idempotent or otherwise safe-to-repeat calls, and use exponential backoff (ideally with jitter) to avoid synchronized retry spikes. Always cap retries; uncontrolled retries can worsen outages.
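The following JDK-only sketch combines a per-attempt timeout with a capped number of retries and exponential backoff with full jitter. Resilience4j's TimeLimiter and Retry modules provide the same behavior with configuration and metrics; the Retries class and its parameters here are hypothetical. Note that CompletableFuture.orTimeout only stops waiting and does not cancel the underlying work, which is one more reason to retry only idempotent calls.

```java
import java.time.Duration;
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.CompletionException;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.ThreadLocalRandom;
import java.util.concurrent.TimeUnit;
import java.util.function.Supplier;

final class Retries {
    // Shared executor (Java 21+); a timed-out attempt may keep running here in the background.
    private static final ExecutorService EXECUTOR = Executors.newVirtualThreadPerTaskExecutor();

    // Only for idempotent calls: a timed-out attempt may still have reached the model.
    static <T> T callWithRetry(Supplier<T> idempotentCall, int maxAttempts,
                               Duration perAttemptTimeout, Duration baseDelay, Duration maxDelay) {
        if (maxAttempts < 1) throw new IllegalArgumentException("maxAttempts must be >= 1");
        CompletionException last = null;
        for (int attempt = 1; attempt <= maxAttempts; attempt++) {
            try {
                return CompletableFuture.supplyAsync(idempotentCall, EXECUTOR)
                        .orTimeout(perAttemptTimeout.toMillis(), TimeUnit.MILLISECONDS)
                        .join(); // throws CompletionException wrapping the failure or a TimeoutException
            } catch (CompletionException e) {
                last = e;
                if (attempt == maxAttempts) break; // retries are capped
                // Backoff ceiling: base * 2^(attempt-1), capped at maxDelay; sleep a random
                // amount up to that ceiling ("full jitter") so clients do not retry in lockstep.
                long exp = baseDelay.toMillis() << Math.min(attempt - 1, 20);
                long cap = Math.min(maxDelay.toMillis(), exp);
                sleepQuietly(ThreadLocalRandom.current().nextLong(cap + 1));
            }
        }
        throw last;
    }

    private static void sleepQuietly(long millis) {
        try {
            Thread.sleep(millis);
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException("interrupted during backoff", e);
        }
    }
}
```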

Circuit Breakers and Bulkheads

To prevent cascading failures, wrap model calls with a circuit breaker. Once failures exceed a configured threshold, open the circuit and fail fast instead of queuing requests on an overloaded model; after a wait period, let a few trial calls through to test whether the model has recovered. Bulkheads isolate resources by capping concurrent calls per dependency or endpoint, so one API endpoint cannot exhaust all threads. Resilience4j supports both a SemaphoreBulkhead and a ThreadPoolBulkhead.
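To show the mechanics, here is a simplified circuit breaker that opens after a number of consecutive failures, plus a semaphore bulkhead. Both classes are hypothetical, JDK-only illustrations; Resilience4j's CircuitBreaker uses a failure-rate sliding window and limits the number of half-open trial calls, which this sketch does not.

```java
import java.time.Duration;
import java.util.concurrent.Semaphore;
import java.util.function.Supplier;

// Illustrative consecutive-failure circuit breaker (not Resilience4j's implementation).
final class SimpleCircuitBreaker {
    enum State { CLOSED, OPEN, HALF_OPEN }

    private final int failureThreshold;
    private final long openNanos;
    private State state = State.CLOSED;
    private int consecutiveFailures;
    private long openedAtNanos;

    SimpleCircuitBreaker(int failureThreshold, Duration openDuration) {
        this.failureThreshold = failureThreshold;
        this.openNanos = openDuration.toNanos();
    }

    synchronized State state() { return state; }

    <T> T call(Supplier<T> modelCall, Supplier<T> fallback) {
        if (!permitCall()) return fallback.get(); // open: fail fast without touching the model
        try {
            T result = modelCall.get();
            onSuccess();
            return result;
        } catch (RuntimeException e) {
            onFailure();
            return fallback.get();
        }
    }

    private synchronized boolean permitCall() {
        if (state != State.OPEN) return true;
        if (System.nanoTime() - openedAtNanos < openNanos) return false;
        state = State.HALF_OPEN; // wait elapsed: allow trial calls (this sketch does not limit how many)
        return true;
    }

    private synchronized void onSuccess() {
        state = State.CLOSED;
        consecutiveFailures = 0;
    }

    private synchronized void onFailure() {
        consecutiveFailures++;
        if (state == State.HALF_OPEN || consecutiveFailures >= failureThreshold) {
            state = State.OPEN;
            openedAtNanos = System.nanoTime();
        }
    }
}

// Semaphore bulkhead: caps concurrent calls so one endpoint cannot take every thread.
final class SimpleBulkhead {
    private final Semaphore permits;

    SimpleBulkhead(int maxConcurrentCalls) { this.permits = new Semaphore(maxConcurrentCalls); }

    <T> T call(Supplier<T> action, Supplier<T> onRejected) {
        if (!permits.tryAcquire()) return onRejected.get(); // full: reject rather than queue
        try {
            return action.get();
        } finally {
            permits.release();
        }
    }
}
```

With hypothetical suppliers model, fallback, and rejected, composing them as bulkhead.call(() -> breaker.call(model, fallback), rejected) sheds excess load before it ever reaches the breaker.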

Rate Limiting

Enforce rate limits per client or API key to protect your model service. Rate limiting is essential for an API at scale: it preserves availability by rejecting or queuing excess requests. Resilience4j’s RateLimiter, for instance, allows limitForPeriod calls per limitRefreshPeriod (such as N calls per second), with a configurable timeoutDuration for how long a request will wait for a permit. When the limit is exceeded, return 429 Too Many Requests or queue the request, rather than overloading the backend.
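A per-key token bucket is one common way to implement this. The JDK-only sketch below is hypothetical: permitsPerSecond sets the steady rate, burst sets the bucket size, and the clock is injectable so the behavior can be tested deterministically. Unlike Resilience4j's RateLimiter, it never waits for a permit; it rejects immediately.

```java
import java.util.concurrent.ConcurrentHashMap;
import java.util.function.LongSupplier;

// Token-bucket limiter keyed by API key (JDK-only sketch, not Resilience4j's algorithm).
final class PerKeyRateLimiter {
    private static final class Bucket {
        double tokens;
        long lastRefillNanos;

        Bucket(double tokens, long now) {
            this.tokens = tokens;
            this.lastRefillNanos = now;
        }
    }

    // Grows with the number of keys; evict idle keys in a real deployment.
    private final ConcurrentHashMap<String, Bucket> buckets = new ConcurrentHashMap<>();
    private final double capacity;      // maximum burst size
    private final double tokensPerNano; // steady-state refill rate
    private final LongSupplier clock;   // System::nanoTime in production, a fake clock in tests

    PerKeyRateLimiter(int permitsPerSecond, int burst, LongSupplier clock) {
        this.capacity = burst;
        this.tokensPerNano = permitsPerSecond / 1_000_000_000.0;
        this.clock = clock;
    }

    // true = proceed; false = reject (for example with HTTP 429 Too Many Requests)
    boolean tryAcquire(String apiKey) {
        long now = clock.getAsLong();
        Bucket b = buckets.computeIfAbsent(apiKey, k -> new Bucket(capacity, now));
        synchronized (b) {
            // Refill for the time elapsed since the last call, never beyond capacity.
            long elapsed = Math.max(0L, now - b.lastRefillNanos);
            b.tokens = Math.min(capacity, b.tokens + elapsed * tokensPerNano);
            b.lastRefillNanos = Math.max(b.lastRefillNanos, now);
            if (b.tokens < 1.0) return false;
            b.tokens -= 1.0;
            return true;
        }
    }
}
```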

Graceful Degradation and Fallbacks

Build fallbacks in case the model or service is unavailable. A simple fallback might be a cached or default prediction. For example, if a heavy ML model fails, you could return a simpler heuristic result rather than erroring. In the UI, communicate degraded mode to users. This maintains functionality even if AI inference is offline.
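A minimal sketch of a fallback chain, assuming a hypothetical asynchronous model client: use the model's answer if it arrives in time, otherwise a cached answer for the same input, otherwise a cheap heuristic. The degraded flag gives the API layer a way to tell clients, and the UI, that a fallback answered. The cache and heuristic are illustrative stand-ins.

```java
import java.util.Map;
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.TimeUnit;
import java.util.function.Function;

// "degraded" lets the API layer and UI signal that a fallback produced the answer.
record Prediction(String label, boolean degraded) {}

final class DegradingPredictor {
    private final Function<String, CompletableFuture<String>> model; // hypothetical async model call
    private final Map<String, String> lastGood = new ConcurrentHashMap<>(); // unbounded; bound it in practice
    private final long timeoutMillis;

    DegradingPredictor(Function<String, CompletableFuture<String>> model, long timeoutMillis) {
        this.model = model;
        this.timeoutMillis = timeoutMillis;
    }

    Prediction predict(String input) {
        CompletableFuture<String> call;
        try {
            call = model.apply(input);
        } catch (RuntimeException e) {
            return fallback(input); // the client failed before returning a future
        }
        return call.orTimeout(timeoutMillis, TimeUnit.MILLISECONDS)
                .thenApply(label -> {
                    lastGood.put(input, label); // remember good answers for later fallbacks
                    return new Prediction(label, false);
                })
                .exceptionally(err -> fallback(input)) // model failure or timeout
                .join();
    }

    private Prediction fallback(String input) {
        String cached = lastGood.get(input);
        if (cached != null) return new Prediction(cached, true); // first choice: cached answer
        return new Prediction(heuristic(input), true);             // second choice: cheap heuristic
    }

    // Hypothetical stand-in for a simpler rule-based or smaller model.
    static String heuristic(String input) {
        return input.length() > 100 ? "long-text" : "short-text";
    }
}
```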

Observability and Logging

Instrument everything. Use Micrometer or OpenTelemetry to export metrics. Resilience4j integrates with Micrometer through its resilience4j-micrometer module, letting you bind circuit-breaker and bulkhead metrics to a MeterRegistry. For tracing, create spans around inference calls and propagate context across service boundaries. Include request IDs (correlation IDs) in logs to tie requests together across components, and log at INFO and ERROR levels. For example, use a Timer around model calls and a Counter for total requests.
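Since Micrometer and OpenTelemetry are external dependencies, the following sketch shows the same idea with JDK classes only: a request counter, a failure counter, accumulated latency, and a correlation ID in every log line. InferenceMetrics is a hypothetical name. In a real service you would instead register a Micrometer Counter and Timer (via Counter.builder(...) and Timer.builder(...)) on your MeterRegistry and wrap the call in an OpenTelemetry span.

```java
import java.lang.System.Logger;
import java.lang.System.Logger.Level;
import java.util.UUID;
import java.util.concurrent.atomic.LongAdder;
import java.util.function.Supplier;

// JDK-only stand-in for a Micrometer Counter/Timer pair around model calls.
final class InferenceMetrics {
    private static final Logger LOG = System.getLogger("inference");

    final LongAdder requests = new LongAdder();   // like a Counter for total requests
    final LongAdder failures = new LongAdder();   // like a Counter for failed requests
    final LongAdder totalNanos = new LongAdder(); // crude Timer: accumulated latency

    <T> T timed(String correlationId, Supplier<T> modelCall) {
        requests.increment();
        long start = System.nanoTime();
        try {
            return modelCall.get();
        } catch (RuntimeException e) {
            failures.increment();
            LOG.log(Level.ERROR, "inference failed correlationId=" + correlationId, e);
            throw e;
        } finally {
            long elapsed = System.nanoTime() - start;
            totalNanos.add(elapsed);
            LOG.log(Level.INFO, "inference done correlationId={0} elapsedMs={1}",
                    correlationId, elapsed / 1_000_000);
        }
    }

    double meanLatencyMillis() {
        long n = requests.sum();
        return n == 0 ? 0.0 : totalNanos.sum() / 1e6 / n;
    }

    // Generate at the edge, then pass along (headers, logs, spans) for the whole request.
    static String newCorrelationId() {
        return UUID.randomUUID().toString();
    }
}
```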

Synchronous Inference With Thread Pool and Queue

A classic approach uses a bounded thread pool and queue to handle blocking model calls. This caps thread growth and applies back pressure once the queue fills, instead of accepting unlimited work.

- We use a ThreadPoolExecutor with a bounded queue (capacity 100). If the queue is full, the CallerRunsPolicy() makes the submitting thread execute the task itself, which slows producers down. You could use AbortPolicy() instead to throw a RejectedExecutionException.

- The predictSync method blocks until the result is ready or a timeout occurs. We catch TimeoutException and return a fallback.

- This synchronous style is simple, but each request occupies a thread for the duration of inference. It suits moderate request rates where the pool size can be tuned.
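A sketch of the blocking wrapper described here, assuming the model is exposed as a blocking function; the class name, constructor parameters, and fallback value are illustrative. One subtlety: when CallerRunsPolicy kicks in, submit runs the task on the calling thread before returning, so that particular request is not bounded by the get timeout.

```java
import java.util.concurrent.ArrayBlockingQueue;
import java.util.concurrent.ExecutionException;
import java.util.concurrent.Future;
import java.util.concurrent.ThreadPoolExecutor;
import java.util.concurrent.TimeUnit;
import java.util.concurrent.TimeoutException;
import java.util.function.Function;

// Hypothetical blocking wrapper around a blocking infer() call.
final class BlockingInferenceService implements AutoCloseable {
    private final ThreadPoolExecutor pool;
    private final Function<String, String> infer; // blocking model call (assumption)
    private final String fallback;

    BlockingInferenceService(Function<String, String> infer, int threads, String fallback) {
        this.infer = infer;
        this.fallback = fallback;
        this.pool = new ThreadPoolExecutor(
                threads, threads,                            // fixed-size pool
                0L, TimeUnit.MILLISECONDS,
                new ArrayBlockingQueue<>(100),               // bounded queue: at most 100 waiting tasks
                new ThreadPoolExecutor.CallerRunsPolicy());  // queue full: caller runs the task
    }

    String predictSync(String input, long timeoutMillis) {
        Future<String> future = pool.submit(() -> infer.apply(input));
        try {
            return future.get(timeoutMillis, TimeUnit.MILLISECONDS);
        } catch (TimeoutException e) {
            future.cancel(true); // interrupts the worker; the model call may or may not honor it
            return fallback;
        } catch (ExecutionException e) {
            return fallback;     // the model call itself threw
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            return fallback;
        }
    }

    @Override
    public void close() {
        pool.shutdown();
    }
}
```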

Asynchronous With CompletableFuture and Virtual Threads

Using CompletableFuture and virtual threads, we can avoid manually sizing and managing thread pools. Virtual threads let us write plain “synchronous” blocking code while running millions of cheap threads.

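Here, each call to supplyAsync that is given a virtual-thread-per-task executor (Executors.newVirtualThreadPerTaskExecutor()) runs its lambda on a new virtual thread; without that executor argument, supplyAsync uses the shared ForkJoinPool.commonPool() instead. According to Oracle docs, a single JVM “might support millions of virtual threads”, so this scales far beyond traditional platform threads. The code stays simple, with a plain blocking infer() call inside, because when a virtual thread blocks on I/O the JVM unmounts it from its carrier OS thread, which is then free to run other virtual threads. This is ideal for highly concurrent, I/O-bound inference, such as calls to a remote model server. Virtual threads add no compute capacity, so for CPU-bound inference, bound the concurrency explicitly, for example with a semaphore sized to the available cores.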
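A sketch of this pattern, requiring Java 21 or later: CompletableFuture.supplyAsync is given a virtual-thread-per-task executor, and a Semaphore caps how many calls reach the model at once. VirtualThreadInference and the slot count are hypothetical; size the limit to the cores, GPU streams, or downstream capacity you actually have.

```java
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.Semaphore;
import java.util.function.Function;

// Requires Java 21+. One virtual thread per request; a semaphore bounds model concurrency.
final class VirtualThreadInference implements AutoCloseable {
    private final ExecutorService executor = Executors.newVirtualThreadPerTaskExecutor();
    private final Semaphore modelSlots;           // e.g. sized to cores or GPU streams (assumption)
    private final Function<String, String> infer; // blocking model or HTTP call (hypothetical)

    VirtualThreadInference(Function<String, String> infer, int maxConcurrentInferences) {
        this.infer = infer;
        this.modelSlots = new Semaphore(maxConcurrentInferences);
    }

    CompletableFuture<String> predictAsync(String input) {
        return CompletableFuture.supplyAsync(() -> {
            try {
                modelSlots.acquire(); // waiting here parks the virtual thread, not a carrier thread
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
                throw new IllegalStateException("interrupted while waiting for a model slot", e);
            }
            try {
                return infer.apply(input); // plain blocking code
            } finally {
                modelSlots.release();
            }
        }, executor); // without this argument, supplyAsync would use ForkJoinPool.commonPool()
    }

    @Override
    public void close() {
        executor.close(); // waits for already-submitted tasks to finish
    }
}
```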

By following these patterns and examples, you can build a Java service that scales AI inference efficiently while keeping your APIs robust and stable. The combination of modern Java features and established resiliency libraries ensures that AI workloads perform well without throwing away hard-earned API contracts.