If your IDE can whip up a feature in minutes, why do releases still get delayed, incidents keep popping up, and PR queues stay backed up?

That’s the question at the heart of vibe coding. The pitch is compelling: describe what you want in plain language, let an LLM generate the code, paste it in, run it, and you’re done. It feels as if software development has finally become English-first.

In real-world software development lifecycles (SDLCs), writing code is rarely the bottleneck. The real constraints are clarity, correctness, integration, security, operability, and change management. Vibe coding speeds up one small part of the process while quietly adding risk and downstream work.

This article is a straightforward guide meant for experienced builders:

- What vibe coding is and why it’s everywhere

- When it actually helps and when it ends up making things worse

- Common concrete mistakes and how to spot them

- A set of GenAI techniques that improve speed and quality across the entire SDLC, not just the code output

What Is ‘Vibe Coding’?

Vibe coding is the practice of having AI tools write code for you instead of writing it yourself. You largely trust what the AI produces and keep refining your prompts until the code does what you want.

Google Cloud describes “pure” vibe coding as essentially forgetting that the code exists, which makes it best suited to quick ideas and throwaway projects. Once you start treating that code as something for real, long-term use, the risks grow.

That framing matters because it draws an important distinction:

- Pure vibe coding: “Make it run.” Minimal review, minimal tests, minimal ownership.

- Responsible AI-assisted development: AI produces the first draft, but humans verify everything through tests, code review, team standards, and production-readiness checks.

Most teams start with the first and accidentally ship the results as if they had gone through the second.

Why Vibe Coding Feels Like a Superpower

When you’re in that zone, everything clicks. The words flow, the ideas come fast, and the code almost seems to write itself. You’re not just typing; you’re building something real that works the way you imagined. Vibe coding is not a gimmick. It works, especially when constraints are loose.

Where It Really Stands Out

- Prototyping and spikes: UI mock flows, proofs of concept, quick scripts, and demo endpoints.

- Boilerplate and scaffolding: CRUD endpoints, DTOs, basic validation, and all the repetitive wiring.

- Unfamiliar stacks: “Show me the idiomatic way to do X in framework Y.”

- Cross-functional collaboration: product and design teams can express what they want to achieve, while engineers work out the details and make it happen.

There’s a good reason why AI code assistants do well in controlled experiments.

But that’s just one piece of the whole story.

The Hidden Cost: Why Vibe Coding Alone Won’t Deliver Real Value in the SDLC

A software organization doesn’t get paid for producing code. It gets paid when reliable changes ship safely.

Vibe coding tends to optimize local metrics, such as keystrokes or time to a running demo, while it can degrade system-level metrics: how many defects escape, how often changes fail, how much review effort is needed, and how maintainable the code stays over time.

These are the most common ways things tend to go wrong.

Pitfall 1: Just Because Something Runs Doesn’t Mean It’s Actually Correct

LLMs are good at producing code that looks right. They don’t always produce code that is right, especially around tricky edge cases and the boundaries where components integrate.

Unexpected impact: sometimes a team that speeds up shipping ends up spending extra time on QA, incident response, and rework. You don’t eliminate the work; you defer it to a point where it costs more.
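Here is a small, made-up illustration of “plausible but wrong” (the `chunk` helper is hypothetical, not output from any particular model). The first version reads fine and passes a happy-path check, but silently drops the last partial chunk whenever the list length isn’t a multiple of the chunk size. One edge-case assertion exposes it.

```python
def chunk_plausible(items: list, size: int) -> list[list]:
    """Looks right, and works whenever len(items) is a multiple of size."""
    # Bug: the range stops early, so a trailing partial chunk is dropped.
    return [items[i:i + size] for i in range(0, len(items) - size + 1, size)]


def chunk(items: list, size: int) -> list[list]:
    """Split items into consecutive chunks of at most `size` elements."""
    if size <= 0:
        raise ValueError("size must be positive")
    return [items[i:i + size] for i in range(0, len(items), size)]


# Happy path: both versions agree, so a quick demo looks fine.
assert chunk_plausible([1, 2, 3, 4], 2) == chunk([1, 2, 3, 4], 2) == [[1, 2], [3, 4]]

# Edge case: the plausible version loses data without raising anything.
assert chunk_plausible([1, 2, 3, 4, 5], 2) == [[1, 2], [3, 4]]
assert chunk([1, 2, 3, 4, 5], 2) == [[1, 2], [3, 4], [5]]
```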

Practical Guardrails

Guardrails are simple, automated rules that catch problems before they reach production, without adding much process overhead.

- Define “done” as: tests pass, lint is clean, the review is approved, and security checks come back clean.

- Enforce these checks in CI so vibe-coded changes can’t slip past them.

- Unit test frameworks: pytest, JUnit, Jest.

- Lint and format: ruff, eslint, prettier, gofmt.

- Type checks: mypy, tsc, to catch type errors before the code runs.

- Static analysis: SonarQube, Semgrep, to find issues without running the code.

- Security scanning: CodeQL, Snyk, Dependabot, Trivy, to catch vulnerabilities early.
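A minimal sketch of that “definition of done” as a single gate script, assuming a Python repo that uses ruff, mypy, and pytest (swap in your own tools). In practice you would express the same commands in your CI system’s pipeline config; the point is that every gate runs, a missing tool counts as a failure rather than a silent skip, and any failure blocks the merge.

```python
import subprocess
import sys
from dataclasses import dataclass


@dataclass
class Gate:
    name: str
    cmd: list[str]


# Assumed toolchain; replace with whatever your repo actually uses.
DEFAULT_GATES = [
    Gate("lint", ["ruff", "check", "."]),
    Gate("types", ["mypy", "."]),
    Gate("tests", ["pytest", "-q"]),
]


def run_gates(gates: list[Gate]) -> list[str]:
    """Run every gate (no short-circuit) and return the names of those that failed."""
    failed = []
    for gate in gates:
        try:
            result = subprocess.run(gate.cmd, check=False)
        except FileNotFoundError:
            # A missing tool is a failure, not a silent skip.
            failed.append(gate.name)
            continue
        if result.returncode != 0:
            failed.append(gate.name)
    return failed


def main() -> int:
    failures = run_gates(DEFAULT_GATES)
    if failures:
        print("Blocked by:", ", ".join(failures), file=sys.stderr)
        return 1
    print("All gates passed")
    return 0
```

A CI step could call it with `raise SystemExit(main())`, so a nonzero exit code fails the job.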

Pitfall 2: Security Debt Comes Built In From the Start

Research suggests that AI-generated code contains vulnerabilities more often than we’d like. A well-known study of GitHub Copilot found that about 40% of the programs it generated for security-relevant scenarios were vulnerable.

If your organization already ships code with vulnerabilities (and many do), vibe coding can make things worse: it increases the volume of code while reducing the scrutiny each piece gets.

Here’s a simple example: an SQL injection “by vibes.”

A model could come up with something like:

```python
# Vulnerable: `email` is interpolated straight into the SQL text
query = f"SELECT * FROM users WHERE email = '{email}'"
cursor.execute(query)
```

It works. It’s also a textbook injection risk.
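To see the difference concretely, here is a self-contained sketch using Python’s built-in `sqlite3` with an in-memory database (the table and rows are made up for illustration). The f-string version from the snippet above lets a crafted input rewrite the `WHERE` clause; the parameterized version passes the value separately through a `?` placeholder, so it is only ever compared as data.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
cur = conn.cursor()
cur.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, email TEXT)")
cur.executemany(
    "INSERT INTO users (email) VALUES (?)",
    [("alice@example.com",), ("bob@example.com",)],
)


def find_user_vulnerable(email: str) -> list:
    # String interpolation: the input becomes part of the SQL text itself.
    query = f"SELECT * FROM users WHERE email = '{email}'"
    return cur.execute(query).fetchall()


def find_user_safe(email: str) -> list:
    # Parameterized: sqlite3 binds `email` as a value for the ? placeholder.
    return cur.execute("SELECT * FROM users WHERE email = ?", (email,)).fetchall()


payload = "' OR '1'='1"
print(len(find_user_vulnerable(payload)))  # 2: the WHERE clause is always true
print(len(find_user_safe(payload)))        # 0: no user has that literal email
```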

How to Defend

- Include secure-by-default templates in your prompts and repo.

- Always use parameterized queries instead of string concatenation.

- Add timeouts to outbound HTTP calls so they don’t hang forever if the server doesn’t respond.

- Validate and sanitize inputs before using them.

- Use scanners that reliably catch these patterns:

- CodeQL, especially if you already use GitHub Advanced Security.

- Semgrep rulesets for injection patterns.

- Dependency scanners, which flag known vulnerabilities in the libraries and components your project relies on.
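A minimal sketch of the input-validation and timeout items, using only the standard library. The email pattern and length cap are a deliberately loose, hypothetical policy (not full RFC 5322 validation), and validation complements parameterized queries rather than replacing them. The HTTP helper restricts URL schemes and always passes an explicit `timeout` to `urllib.request.urlopen`.

```python
import re
import urllib.parse
import urllib.request

# Hypothetical, deliberately loose policy; not full RFC 5322 validation.
EMAIL_RE = re.compile(r"[^@\s]+@[^@\s]+\.[^@\s]+")
MAX_EMAIL_LEN = 254
ALLOWED_SCHEMES = {"https"}


def clean_email(raw: str) -> str:
    """Normalize an email address and reject anything that doesn't look like one."""
    email = raw.strip().lower()
    if len(email) > MAX_EMAIL_LEN or not EMAIL_RE.fullmatch(email):
        raise ValueError("invalid email address")
    return email


def fetch(url: str, timeout_s: float = 5.0) -> bytes:
    """GET a URL with a scheme allowlist and an explicit timeout."""
    scheme = urllib.parse.urlsplit(url).scheme
    if scheme not in ALLOWED_SCHEMES:
        raise ValueError(f"scheme not allowed: {scheme!r}")
    # Without `timeout`, urlopen uses the global socket default, which is
    # None (wait indefinitely) unless something has changed it.
    with urllib.request.urlopen(url, timeout=timeout_s) as resp:
        return resp.read()
```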

Pitfall 3: Productivity Gains Are Uneven and Can Even Reverse

Here’s where it gets tricky: vibe coding might actually slow down experienced developers when they’re working on real-world codebases.

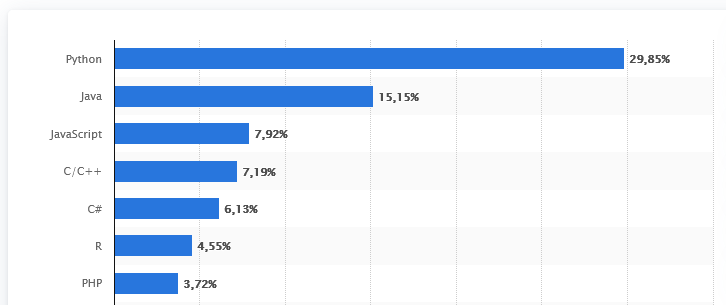

A 2025 randomized controlled trial by METR found that experienced open-source developers using early-2025 AI tools took 19% longer to complete tasks in their own repositories.

Reuters’ coverage highlights a striking detail: before the study, developers expected AI to make them about 24% faster, and even afterward they believed it had made them about 20% faster, yet their task completion time actually rose by 19%. The slowdown came largely from the extra time spent checking and fixing the AI’s suggestions.

So yes, you can move faster when the task is clear, greenfield, and well-defined. But in older systems, the “trust tax” (the time spent verifying and correcting AI output) can dominate.

Here’s what SDLC leaders need to know: you can’t simply “switch on AI” and expect productivity to improve right away. You need to redesign the workflow around it.

Pitfall 4: Agents and Tools Open New Attack Surfaces

As teams move from chatting inside the IDE to agentic workflows (tools that can run commands, edit files, and open PRs), the risk profile changes.

OWASP’s Top 10 for LLM Applications highlights prompt injection and related risks, especially when models ingest external content such as files, documents, or web pages.

We’re already seeing real incidents and vulnerabilities in code-agent workflows. Ars Technica reported a flaw in Google’s Gemini CLI coding tool that could let attackers run commands on user devices, which shows why an “LLM with shell access” needs strong guardrails.
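A minimal sketch of one such guardrail, with a hypothetical allowlist: model-proposed shell commands are parsed with `shlex` and run as an argument list (never `shell=True`), and anything outside a small read-only allowlist requires explicit human approval. This illustrates the pattern; it is not a substitute for OS-level sandboxing.

```python
import shlex
import subprocess
from typing import Callable, Optional

# Hypothetical allowlist: read-only command prefixes an agent may run unprompted.
AUTO_APPROVED_PREFIXES = [("git", "status"), ("git", "diff"), ("git", "log"), ("ls",)]


def parse_argv(command: str) -> Optional[list[str]]:
    """Split like a POSIX shell would; None if the input is empty or malformed."""
    try:
        argv = shlex.split(command)
    except ValueError:  # e.g. unbalanced quotes
        return None
    return argv or None


def needs_approval(argv: list[str]) -> bool:
    """True unless argv is an allowlisted prefix followed only by plain arguments."""
    for prefix in AUTO_APPROVED_PREFIXES:
        if tuple(argv[: len(prefix)]) == prefix:
            # Options can change behavior (e.g. `git diff --output=FILE` writes
            # a file), so any extra argument starting with "-" goes to a human.
            return any(arg.startswith("-") for arg in argv[len(prefix):])
    return True


def run_agent_command(
    command: str, approve: Callable[[str], bool]
) -> Optional[subprocess.CompletedProcess]:
    """Run a model-proposed command only if allowlisted or approved by a human."""
    argv = parse_argv(command)
    if argv is None:
        return None  # refuse anything we cannot parse unambiguously
    if needs_approval(argv) and not approve(command):
        return None
    # Passing a list without shell=True means ';', '&&' and '|' reach the
    # program as literal arguments instead of being interpreted by a shell.
    return subprocess.run(argv, capture_output=True, text=True, timeout=60, check=False)
```

The `approve` callback is where a human-in-the-loop prompt would go; defaulting to “ask” for anything unrecognized keeps the failure mode conservative.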

So What Actually Unlocks GenAI Value Across the SDLC?

Here’s the main idea: vibe coding speeds up writing code, but the real SDLC value comes from faster feedback and fewer defects.

You get lasting gains when you use GenAI to shorten each of these transitions:

- Idea to a clear, workable plan or spec

- Spec to a tested implementation

- Implementation to a reviewed merge

- Merge to a safe deploy

- Deploy to observable outcomes
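One way to make those transitions visible is to timestamp each milestone and compute the hops between them. A minimal sketch with hypothetical milestone names and made-up timestamps; in a real setup the events would come from your tracker, VCS, CI, and observability tools.

```python
from datetime import datetime, timedelta

# Hypothetical milestone names, in SDLC order.
STAGES = ["idea", "spec", "tested", "merged", "deployed", "observed"]


def transition_times(events: dict[str, datetime]) -> dict[str, timedelta]:
    """Duration of each consecutive hop whose start and end were both recorded."""
    durations = {}
    for start, end in zip(STAGES, STAGES[1:]):
        if start in events and end in events:
            durations[f"{start}->{end}"] = events[end] - events[start]
    return durations


# Made-up timestamps for a single change.
change = {
    "idea": datetime(2025, 3, 3, 9, 0),
    "spec": datetime(2025, 3, 3, 15, 0),
    "tested": datetime(2025, 3, 4, 11, 0),
    "merged": datetime(2025, 3, 6, 10, 0),
    "deployed": datetime(2025, 3, 6, 16, 0),
}

for hop, duration in transition_times(change).items():
    print(f"{hop}: {duration.total_seconds() / 3600:.1f} h")
```

Hops with a missing endpoint (here, `deployed->observed`) are skipped rather than guessed.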

Here are some techniques that regularly help improve speed and quality.

Technique 1: Spec First, Turning Plain English into Contracts Before Writing Code

Before you ask for implementation, have the model produce:

- Acceptance criteria: the specific conditions a change must meet for stakeholders to accept it as complete.

- Edge cases: inputs and situations outside the usual happy path.

- Data contracts: the schemas and invariants the data must follow.

- Non-functional requirements: latency, reliability, auditability.

- A threat model: what could go wrong, and how someone might exploit it.

Then capture it in durable, preferably machine-checkable artifacts:

- OpenAPI (formerly Swagger) for API specifications

- JSON Schema or Protobuf for data structures

- ADRs (Architecture Decision Records) for design constraints

Prompt pattern:

- “Generate an OpenAPI spec for this endpoint, including error responses.”

- “Now generate an implementation that conforms to this spec.”

- “Now generate tests that prove it meets the spec.”

This shifts GenAI from a mere source of code to a collaborator that works against explicit, checkable contracts.
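As a minimal sketch of “spec as an executable artifact”, here is a hypothetical `CreateOrderRequest` contract in plain Python, standing in for a JSON Schema or Protobuf definition. The field names and limits are invented for illustration; the point is that acceptance criteria and edge cases become invariants and tests that any generated implementation must satisfy.

```python
from dataclasses import dataclass

# Hypothetical contract for a "create order" request; invariants from the spec
# are enforced at construction time instead of living only in a document.
ALLOWED_CURRENCIES = {"USD", "EUR"}


@dataclass(frozen=True)
class CreateOrderRequest:
    sku: str
    quantity: int
    currency: str

    def __post_init__(self) -> None:
        if not self.sku:
            raise ValueError("sku must be non-empty")
        if not 1 <= self.quantity <= 100:
            raise ValueError("quantity must be between 1 and 100")
        if self.currency not in ALLOWED_CURRENCIES:
            raise ValueError(f"unsupported currency: {self.currency}")


# Acceptance criteria and edge cases from the spec, expressed as tests.
def test_accepts_valid_order() -> None:
    CreateOrderRequest(sku="ABC-1", quantity=1, currency="USD")


def test_rejects_edge_cases() -> None:
    bad_inputs = [
        dict(sku="", quantity=1, currency="USD"),
        dict(sku="ABC-1", quantity=0, currency="USD"),
        dict(sku="ABC-1", quantity=101, currency="USD"),
        dict(sku="ABC-1", quantity=1, currency="GBP"),
    ]
    for bad in bad_inputs:
        try:
            CreateOrderRequest(**bad)
        except ValueError:
            continue
        raise AssertionError(f"accepted invalid input: {bad}")
```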

Technique 2: Test-First AI, Where the Model Has to Earn Its Way Into the Merge

If you pick just one habit to start with, let it be this one.

- First, have the AI write unit and integration tests

- Run them and watch them fail

- Ask the AI to iterate until the tests pass

- Keep the tests. Don’t be afraid to throw away code that isn’t working

Tools that help get this done:

- pytest with Hypothesis for property-based testing, which generates many test inputs for you

- Jest and Vitest for JavaScript and TypeScript

- Playwright and Cypress for end-to-end UI flows

This works because you replace vibes with actual, measurable behavior.
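The loop above, sketched with a hypothetical `parse_duration` helper: the test function is written first (pytest would collect it by its `test_` name prefix), and the implementation underneath is what you would ask the model to iterate on until the test passes. If the code keeps failing, you regenerate the code, not the test.

```python
import re


# Step 1: the test exists (and fails) before any implementation does.
def test_parse_duration() -> None:
    assert parse_duration("45s") == 45
    assert parse_duration("1h30m") == 5400
    assert parse_duration("2m5s") == 125
    for bad in ["", "10", "1x", "h1", "1h1h"]:
        try:
            parse_duration(bad)
        except ValueError:
            continue
        raise AssertionError(f"accepted invalid input: {bad!r}")


# Step 2: the implementation the model iterates on until the test passes.
_DURATION_RE = re.compile(r"(?:(\d+)h)?(?:(\d+)m)?(?:(\d+)s)?")


def parse_duration(text: str) -> int:
    """Parse strings like '1h30m' or '45s' into a number of seconds."""
    match = _DURATION_RE.fullmatch(text)
    # Every group is optional, so the empty string would match; reject it explicitly.
    if not text or match is None:
        raise ValueError(f"invalid duration: {text!r}")
    hours, minutes, seconds = (int(g) if g else 0 for g in match.groups())
    return hours * 3600 + minutes * 60 + seconds
```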

Technique 3: Ground the Model in Your Codebase, Because RAG Beats Guessing Blindly

Many so-called “LLM mistakes” are really context failures:

- Wrong dependency versions

- Wrong conventions

- Reinvented helpers that already exist in the repo

- Duplicated business logic

Use repo-aware workflows:

- Project-aware IDE assistants such as Copilot Chat and Cursor

- Internal docs indexed for retrieval (RAG)

- Prompts that state context explicitly, such as “here are the canonical patterns in this repo”

Your goal is to make the model’s output boring and predictable: the same patterns throughout, the same structure every time.
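To make the retrieval step concrete, here is a deliberately naive sketch: keyword overlap stands in for the embedding search a real RAG setup would use, and the doc paths and helper names (`http_client.get_json`, `db.fetch_one`) are hypothetical. The shape is what matters: find the repo’s canonical patterns first, then put them in front of the task.

```python
import re


def tokens_of(text: str) -> list[str]:
    """Lowercase word tokens; a crude stand-in for embeddings."""
    return re.findall(r"[a-z0-9_]+", text.lower())


def retrieve(query: str, docs: dict[str, str], k: int = 2) -> list[str]:
    """Names of up to k docs sharing the most tokens with the query."""
    wanted = set(tokens_of(query))
    scores = {name: sum(t in wanted for t in tokens_of(body)) for name, body in docs.items()}
    ranked = sorted(scores, key=lambda name: (-scores[name], name))
    return [name for name in ranked[:k] if scores[name] > 0]


def build_prompt(task: str, docs: dict[str, str], k: int = 2) -> str:
    """Put the repo's canonical patterns in front of the task."""
    context = "\n\n".join(f"### {name}\n{docs[name]}" for name in retrieve(task, docs, k))
    return (
        "Follow the canonical patterns below and reuse existing helpers "
        "instead of writing new ones.\n\n"
        f"{context}\n\n### Task\n{task}"
    )


# Hypothetical repo docs.
docs = {
    "docs/http_client.md": "Use http_client.get_json for outbound calls; it sets retries and timeouts.",
    "docs/db.md": "All queries go through db.fetch_one with bound parameters.",
    "docs/style.md": "Use snake_case names and type hints everywhere.",
}
print(build_prompt("add an outbound call to the billing API with timeouts", docs))
```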

Technique 4: Use AI to Review and Refactor Code, a Smart Move With High Benefit and Low Risk

Generating brand-new code usually carries more risk than fixing or improving code that already exists.

Use cases that bring a good return on investment:

- Refactoring for readability and maintainability.

- Drafting migration plans for framework upgrades and API versioning.

- Summarizing PRs and flagging risky diffs.

- Identifying gaps in test coverage.
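AI review works best when it knows where to look. A minimal sketch, with hypothetical risk rules, that pre-flags files from `git diff --numstat` output (tab-separated added and deleted line counts plus the path, with `-` counts for binary files) so they get extra attention, whether from an AI summary, a human reviewer, or both.

```python
import fnmatch

# Hypothetical risk rules; tune them to the sensitive areas of your own repo.
RISKY_PATTERNS = ["*migrations/*", "*auth*", "*.sql", ".github/workflows/*", "*requirements*.txt"]
LARGE_CHANGE = 300  # total changed lines above which any file gets flagged


def flag_risky(numstat: str) -> list[tuple[str, str]]:
    """Parse `git diff --numstat` output into (path, reason) pairs worth a closer look."""
    flagged = []
    for line in numstat.splitlines():
        if not line.strip():
            continue
        added, deleted, path = line.split("\t", 2)
        if added == "-":  # --numstat reports "-" counts for binary files
            flagged.append((path, "binary file"))
        elif any(fnmatch.fnmatch(path, pattern) for pattern in RISKY_PATTERNS):
            flagged.append((path, "sensitive path"))
        elif int(added) + int(deleted) > LARGE_CHANGE:
            flagged.append((path, "large change"))
    return flagged


sample = "12\t3\tsrc/auth/session.py\n420\t10\tsrc/report.py\n5\t1\tREADME.md\n-\t-\tassets/logo.png\n"
for path, reason in flag_risky(sample):
    print(f"{path}: {reason}")
```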

GitHub ran an RCT-style study of Copilot and reported quality gains in some areas: developers using Copilot were 53.2% more likely to pass all the unit tests in the study task, their code showed small but measurable improvements in readability, reliability, maintainability, and conciseness, and it was 5% more likely to be approved in blind reviews.

Skepticism toward vendor studies is healthy. Still, the key point holds: real quality gains come when developers reinvest the time they save into refining their work, not into pushing out more code.

Technique 5: Treat the Model as Untrusted and Make It Secure by Default

A simple starting point for GenAI DevSecOps:

- Require human approval before agents run any commands.

- Sandbox file system access so agents can only read and write within a designated area.

- Keep secrets out of prompts: use secret managers, and redact sensitive values.

- Run SAST, DAST, and dependency scans in CI, with no exceptions.

- Include AI-specific threats in design-review threat models: prompt injection, excessive autonomy, and data leakage.
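Secret managers are the real fix, but a last-line redaction pass before any text is sent to a model is cheap. A minimal sketch with a few hypothetical, non-exhaustive patterns (the `AKIA` prefix is the one used by long-term AWS access key IDs); treat it as a safety net, not a replacement for a dedicated secret scanner.

```python
import re

# Hypothetical, non-exhaustive patterns applied in order.
SECRET_PATTERNS = [
    # Long-term AWS access key IDs: "AKIA" followed by 16 characters.
    (re.compile(r"AKIA[0-9A-Z]{16}"), "[REDACTED_AWS_KEY_ID]"),
    # PEM-encoded private key blocks, spanning multiple lines.
    (
        re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----.*?-----END [A-Z ]*PRIVATE KEY-----", re.DOTALL),
        "[REDACTED_PRIVATE_KEY]",
    ),
    # key=value or key: value pairs whose name suggests a secret.
    (
        re.compile(r"(password|passwd|secret|api_key|token)(\s*[:=]\s*)\S+", re.IGNORECASE),
        r"\1\2[REDACTED]",
    ),
]


def redact(text: str) -> str:
    """Replace likely secrets before the text goes into a prompt."""
    for pattern, replacement in SECRET_PATTERNS:
        text = pattern.sub(replacement, text)
    return text
```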

Where Vibe Coding Still Fits In

Vibe coding definitely has its place in:

- Temporary prototypes

- Personal tools

- Hackathon demos

- Learning exercises

Just remember, saying “I can generate it” isn’t the same as “we can operate it.”

Once code goes live, it turns into a long-term liability unless you can:

- Test it

- Lock it down

- Maintain it

- Watch it

- Explain it

A Straightforward Checklist: From Vibes to Value

If you want GenAI to improve the SDLC rather than just generate code, adopt it in this order:

- CI quality gates: tests, linting, type checks, and security scans.

- Spec-first and test-first prompting.

- Repo-grounded context: assistants that are aware of your specific projects and repositories.

- AI for reviewing and improving existing code, not only for writing new code.

- Agent guardrails: permissions, approvals, and sandboxing.

- Outcome metrics: lead time, rework, and incidents rather than sheer volume of output.
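A minimal sketch of outcome metrics computed from deploy records, assuming you can link each deploy to its first commit, to any incident it caused, and to any revert or hotfix (the `Deploy` fields are hypothetical). The change failure rate here follows the spirit of the DORA metrics: the share of deploys that caused an incident.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import median


@dataclass
class Deploy:
    first_commit: datetime
    deployed: datetime
    caused_incident: bool       # hypothetical: linked from your incident tracker
    reverted_or_hotfixed: bool  # hypothetical proxy for rework


def outcome_metrics(deploys: list[Deploy]) -> dict[str, float]:
    """Median lead time in hours, plus incident and rework rates per deploy."""
    if not deploys:
        raise ValueError("need at least one deploy")
    lead_hours = [(d.deployed - d.first_commit).total_seconds() / 3600 for d in deploys]
    return {
        "median_lead_time_h": median(lead_hours),
        "change_failure_rate": sum(d.caused_incident for d in deploys) / len(deploys),
        "rework_rate": sum(d.reverted_or_hotfixed for d in deploys) / len(deploys),
    }
```

Tracking these per team over time, rather than lines or PRs produced, shows whether AI assistance is actually paying off.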

The Bigger Story

The industry is clearly shifting toward more AI-generated code. Google has said that more than a quarter of its new code is generated by AI and then reviewed by engineers.

That doesn’t mean you should just go with the vibes. It means engineering judgment, verification, and operational discipline are becoming scarcer and more valuable.

Vibe coding can help you reach a demo. A GenAI workflow that focuses on quality and feedback helps you build a system you can depend on.